Just when you thought your robot vacuum was getting clever, startup Genesis AI has unveiled a system that makes it look like a pet rock. The company dropped a series of videos showcasing GENE-26.5, what it calls a “robotic brain,” performing a dizzying array of complex tasks—from cooking a meal to playing the piano and conducting lab experiments—all reportedly using the exact same AI model with no retraining.

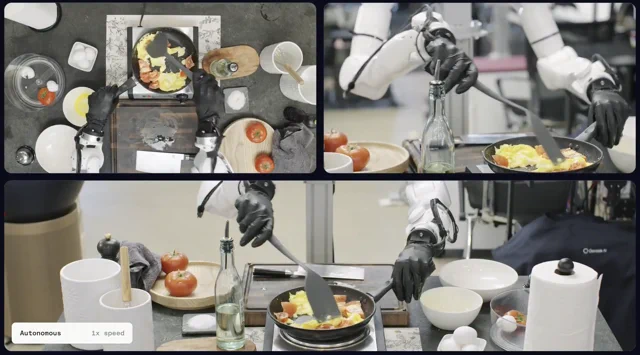

According to posts from CEO Zhou Xian, the demonstrations are all fully autonomous and run at 1x speed. One video shows the robotic system meticulously preparing a dish, which Xian notes they’ve “been cooking for a year.” That is either a clever pun or a sobering statement on the difficulty of the problem. Probably both. Other clips show off the system’s dexterity as it solves a Rubik’s cube and delicately handles lab equipment with millimeter-level precision.

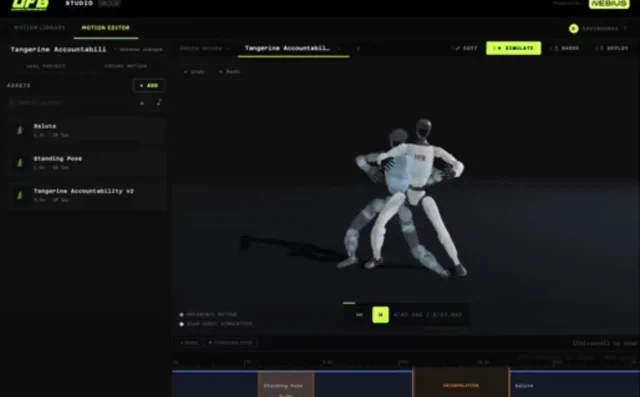

Genesis AI claims the key to this breakthrough is rebuilding the robotics stack from the ground up. The system integrates four core components: a robotics-native foundation model trained on language, vision, proprioception, and tactile data; a “1:1 human-like robotic hand” for manipulation; a noninvasive data collection glove that captures motion, force, and touch from human demonstrators; and a simulator designed to drastically cut down experiment times.

Why is this important?

The holy grail of modern robotics is generalization: creating a single system that can learn and perform a wide variety of tasks without being explicitly programmed for each one. For years, the primary bottleneck has been collecting high-quality, multimodal data from humans. Genesis AI’s full-stack approach, particularly the data-gathering glove paired with a human-like hand, is a direct assault on this problem. While other companies are building massive AI models, Genesis is building the entire ecosystem needed to feed its model the right data. If GENE-26.5 can truly generalize across such diverse and delicate tasks using a single set of weights, it represents a significant step toward robots that don’t just follow instructions but actually learn skills.