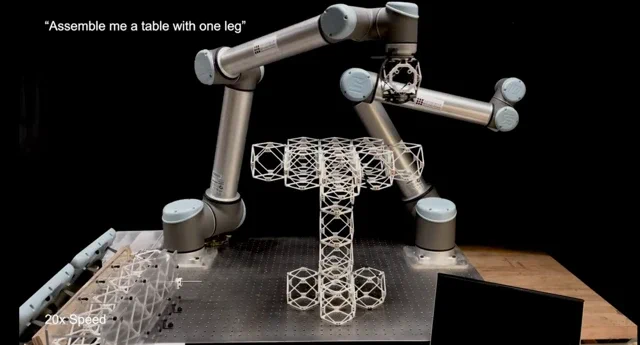

Researchers at the Massachusetts Institute of Technology (MIT) have developed a “Speech-to-Reality” system, an AI-powered workflow that allows a robotic arm to build furniture from simple voice commands. Forget your Allen keys and inscrutable diagrams; the system can assemble a stool or shelf from modular components in as little as five minutes after hearing a prompt like, “I want a simple stool.”

The project, born out of Professor Neil Gershenfeld’s legendary “How to Make Almost Anything” course, combines several rapidly advancing fields into a single pipeline. “We’re connecting natural language processing, 3D generative AI, and robotic assembly,” explained Alexander Htet Kyaw, an MIT graduate student who led the project at the MIT Center for Bits and Atoms (CBA). The system works in three stages: a large language model interprets the user’s request, a 3D generative AI model creates a digital model of the object, and a series of algorithms then plans a feasible assembly sequence for the robot.
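
To make the last stage more concrete, here is a minimal sketch of what "planning a feasible assembly sequence" could look like for modular components. It is an illustration only, not the MIT team's algorithm: it assumes the digital model has already been reduced to a set of integer grid cells, and the function name `plan_assembly` and the support rule (a module may be placed if it sits on the ground or touches a module that is already placed) are hypothetical simplifications.

```python
from collections import deque

# A module position on an integer grid: (x, y, z), with z == 0 on the ground.
# This grid representation is an assumption made for the sketch.
Cell = tuple[int, int, int]

# The six face-adjacent directions in which two modules can connect.
NEIGHBOR_OFFSETS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def plan_assembly(cells: set[Cell]) -> list[Cell]:
    """Return a placement order in which every module is supported.

    Hypothetical support rule: a module can be placed if it rests on the
    ground (z == 0) or is face-adjacent to an already placed module.
    The order is found with a breadth-first search from the ground cells.
    """
    # Start with all ground-level modules, sorted so the result is deterministic.
    queue = deque(sorted(c for c in cells if c[2] == 0))
    scheduled = set(queue)
    order: list[Cell] = []

    while queue:
        x, y, z = queue.popleft()
        order.append((x, y, z))
        for dx, dy, dz in NEIGHBOR_OFFSETS:
            neighbor = (x + dx, y + dy, z + dz)
            if neighbor in cells and neighbor not in scheduled:
                scheduled.add(neighbor)
                queue.append(neighbor)

    # Any module never reached is floating: it has no chain of support to the ground.
    if len(order) != len(cells):
        raise ValueError("some modules are not connected to the ground")
    return order


# Usage: a tiny L-shaped structure, one ground module with two modules above it.
if __name__ == "__main__":
    for step, cell in enumerate(plan_assembly({(0, 0, 0), (0, 0, 1), (1, 0, 1)}), start=1):
        print(step, cell)
```

A real planner would also have to account for things this sketch ignores, such as whether the robot arm can physically reach each position without colliding with modules already in place.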

So far, the team has successfully commanded the robot to create stools, shelves, chairs, a small table, and even a decorative dog statue. The components are designed to be disassembled and reused, offering a more sustainable alternative to traditional manufacturing. While the current connections are magnetic, the researchers plan to upgrade to more robust methods to improve the furniture’s weight-bearing capacity.

Why is this important?

This system is a meaningful step toward democratizing manufacturing. By removing the need for expertise in 3D modeling or robotics programming, it opens the door to on-demand, personalized creation for the average person. It is more than a novelty: it is a proof of concept for a future where the physical world can be manipulated as easily as the digital one. Instead of spending hours deciphering flat-pack furniture instructions, you could simply describe what you need and watch it materialize. The promise of reconfiguring your furniture with a voice command (turning a bed into a sofa, for instance) points to a future of truly adaptable living spaces.