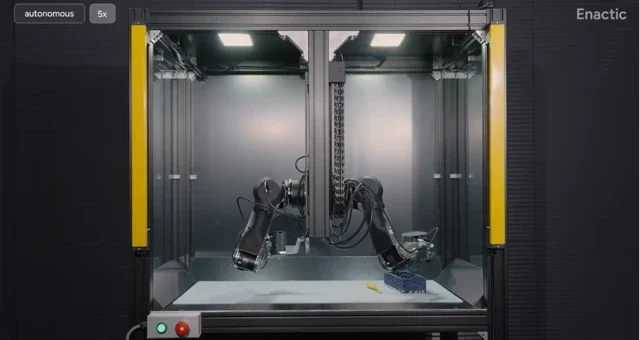

Tired of seeing amazing robot demos that feel more like sci-fi movie magic than reproducible science? You aren’t alone. The robotics world has a bit of a problem: what works in one lab often can’t be replicated in another, thanks to bespoke hardware and unique testing conditions. Enactic AI is taking a shot at fixing this with its new OpenArm 02, a fully open-source dual-arm platform designed specifically for reproducible evaluation.

The core idea is brutally simple: standardize the physical robot so that research results can actually be compared across different institutions. The OpenArm 02 is a modular, 7-DOF humanoid arm system that provides researchers with a common hardware baseline. It’s accompanied by two clever additions: the OpenArm KER, a lightweight wearable device for low-latency data acquisition, and AutoEval, a framework for running a 24/7 real-world evaluation loop with minimal human intervention. Instead of a grad student spending their weekend manually resetting a task, policies can be evaluated continuously under the exact same conditions.
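To make the "evaluation loop" idea concrete, here is a minimal sketch of what continuous, unattended evaluation can look like in principle. It is not AutoEval's actual API: `reset_scene`, `evaluate`, `success_rate`, and the toy policy are hypothetical names invented for illustration, and the robot is replaced by a seeded random number generator so the snippet runs anywhere.

```python
import random
from dataclasses import dataclass


@dataclass
class EpisodeResult:
    episode: int
    success: bool


def reset_scene():
    """Hypothetical stand-in for an automated reset.

    On a real setup this would put the workspace back into a known
    state; here it just returns the same start state every time.
    """
    return {"object_pose": (0.30, 0.00, 0.05)}


def evaluate(policy, num_episodes, seed=0):
    """Run `policy` for `num_episodes`, resetting identically before each one."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    results = []
    for episode in range(num_episodes):
        start_state = reset_scene()
        success = bool(policy(start_state, rng))
        results.append(EpisodeResult(episode, success))
    return results


def success_rate(results):
    """Fraction of successful episodes (0.0 for an empty run)."""
    if not results:
        return 0.0
    return sum(r.success for r in results) / len(results)


def toy_policy(state, rng):
    """Assumed placeholder policy that 'succeeds' about 80% of the time."""
    return rng.random() < 0.8


if __name__ == "__main__":
    run = evaluate(toy_policy, num_episodes=100, seed=42)
    print(f"success rate: {success_rate(run):.2f}")
```

The point of the sketch is the shape of the loop: every episode starts from the same reset, and the only thing that changes between runs is the policy under test. That is what makes two success rates comparable.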

The platform is more than just a blueprint; it’s a complete ecosystem. The hardware, from CAD files to electronics, is open-sourced, along with the firmware and control software. With native support for ROS 2, a nominal payload of 4.1 kg, and back-drivable actuators for safe human interaction, it’s built for serious research right out of the box.

Why is this important?

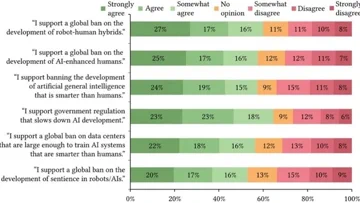

The “reproducibility crisis” is a well-documented plague in many scientific fields, and robotics is no exception. In a widely cited 2016 Nature survey of researchers across disciplines, more than 70% said they had tried and failed to reproduce another scientist’s experiments. By open-sourcing a capable, standardized hardware platform, Enactic AI is offering the community a potential common language. This shifts the focus from one-off, impressive-but-isolated demos to shared, comparable benchmarks. It’s an attempt to build a foundation where new algorithms and policies can be tested on a level playing field, potentially accelerating the pace of innovation for everyone.