Just when you thought the AI world couldn’t get more saturated with “world models,” NVIDIA has dropped one that actually matters for the physical world. Meet DreamZero, a 14-billion-parameter robot foundation model that can interpret a simple text command and perform a task it was never explicitly trained on. Dubbed a “World Action Model” (WAM), it works by “dreaming” the desired future in video pixels, then figuring out the motor controls needed to make that future a reality.

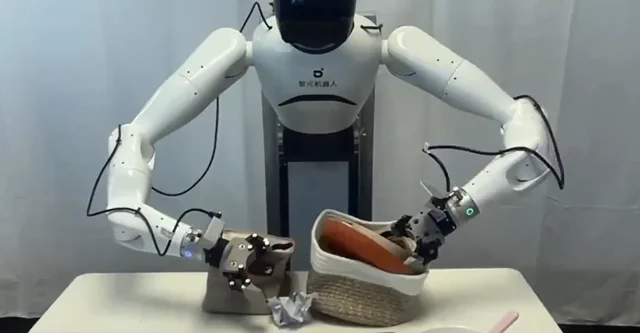

The real kicker is its dizzying adaptability. DreamZero can transfer its knowledge to a completely new, unseen robot with only about 55 demonstration trajectories, which amounts to roughly 30 minutes of a human teleoperating the machine. This is a monumental leap in efficiency compared to the hundreds of hours of demonstrations previously required. According to NVIDIA’s research, DreamZero shows more than double the performance of previous state-of-the-art Vision-Language-Action (VLA) models when generalizing to new tasks and environments. You can see the robot in action, tackling everything from untying shoelaces to shaking hands, on the official project website.

The project has yielded two key insights that challenge conventional wisdom in robot training. First, for WAMs, data diversity is far more important than endless repetitions of the same task. Second, the long-standing problem of transferring knowledge between different robot bodies (cross-embodiment) is best solved with pixels. Video, it turns out, is the universal translator, allowing for significant robot-to-robot and even human-to-robot skill transfer. The model and its weights are also being open-sourced via GitHub, allowing the entire robotics community to build upon this new foundation.

Why is this important?

DreamZero represents a fundamental shift in how we approach robot learning. Instead of painstakingly programming a robot for every conceivable task (an impossible and brittle strategy), the industry is moving towards generalist models that can learn and adapt on the fly. By learning the physics of the world through video, WAMs can generate behaviors for tasks that were absent from their training data, such as untying a shoelace.

The researchers themselves have modestly compared this to the “GPT-2 era” of robotics: it’s not perfect or “GPT-3 reliable” yet, but it’s a powerful foundational step. By building robots that can learn from varied data sources, including videos of humans, and adapt to new hardware with roughly 30 minutes of demonstrations, NVIDIA is drastically lowering the barrier to deploying robots for complex, real-world applications. It’s less about teaching a robot a specific job and more about giving it the ability to learn any job.